CrewAI Open Source

The open source, multi-agent platform

The free, code-first foundation for building, orchestrating and scaling autonomous AI agents with 100s of tools out of the box. Used by 63% of the Fortune 500.

Loved by AI builders. Trusted by AI leaders.

Why CrewAI Open Source?

CrewAI is built in the open to make multi-agent AI accessible to all. With no-code speed and full-code power, it gives developers and teams the freedom to create, orchestrate, and scale agents without barriers. Backed by a growing community of more than 100,000 developers and translated into four languages, CrewAI sets the standard for transparent, reliable, and enterprise-ready AI.

Planning

Planning agent for tasks

Crews of AI agents can take advantage of a specialized planning agent that creates a step-by-step plan for all tasks and shares it with the crew. The new plan-execute pattern lets agents draft, refine, and follow structured plans that adapt as work unfolds.
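The plan-execute idea can be sketched in a few lines of plain Python. This is an illustration of the pattern only, not CrewAI's API: `Step`, `draft_plan`, and `execute_plan` are hypothetical names, and the planner here just numbers the tasks where a real planning agent would ask an LLM to draft the steps.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Step:
    description: str
    result: Optional[str] = None  # filled in once the step has been executed


def draft_plan(tasks: List[str]) -> List[Step]:
    # Hypothetical planner: numbers each task to form a step-by-step plan.
    return [Step(f"Step {i}: {task}") for i, task in enumerate(tasks, start=1)]


def execute_plan(plan: List[Step], worker: Callable[[str, List[str]], str]) -> List[Step]:
    # The full outline is shared with every worker, mirroring a plan shared with the crew.
    outline = [step.description for step in plan]
    for step in plan:
        step.result = worker(step.description, outline)
    return plan


plan = draft_plan(["research topic", "write summary"])
executed = execute_plan(
    plan,
    worker=lambda step, outline: f"done: {step} [{len(outline)} steps planned]",
)
```

Each worker sees both its own step and the whole outline, which is the part of the pattern that lets an agent adjust its work to what comes before and after it.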

Tools

100s of open-source tools out of the box

CrewAI provides AI agents with hundreds of open-source tools out of the box — search the Internet, interact with websites, query vector databases, run code, and much more. With first-class support for MCP (Model Context Protocol), Custom MCP Servers, and native sandbox tools for E2B and Daytona, agents can safely execute code and call any tool you need, anywhere.

Memory

Sophisticated memory management

CrewAI implements a sophisticated memory-management system that gives AI agents access to shared short-term, long-term, entity, and contextual memory. Choose from storage backends including Qdrant Edge, ChromaDB, Mem0, and SQLite, with hierarchical memory isolation via automatic root_scope so multi-tenant crews stay safely separated.
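To make the isolation idea concrete, here is a minimal sketch of scope-based memory in plain Python. It is not CrewAI's implementation: `ScopedMemory` and its methods are hypothetical, and only the notion of a root scope is taken from the text above.

```python
class ScopedMemory:
    """Toy memory store where every entry lives under a slash-separated scope path."""

    def __init__(self):
        self._items = []  # list of (scope, text) pairs

    def save(self, scope: str, text: str) -> None:
        self._items.append((scope.rstrip("/"), text))

    def recall(self, root_scope: str) -> list:
        # Return only entries at or below root_scope; sibling scopes stay invisible.
        root = root_scope.rstrip("/")
        return [
            text
            for scope, text in self._items
            if scope == root or scope.startswith(root + "/")
        ]


memory = ScopedMemory()
memory.save("/tenant-a/crew-1", "customer prefers email")
memory.save("/tenant-a/crew-2", "invoice sent on Monday")
memory.save("/tenant-ab/crew-1", "unrelated tenant note")
```

Matching on `root + "/"` rather than on a bare prefix is what keeps `/tenant-a` from leaking into `/tenant-ab`.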

Knowledge

Agentic RAG of knowledge sources

Agentic RAG combines a broad range of knowledge sources (files, websites, and vector databases) with intelligent query rewriting to optimize retrieval. Path and URL validation plus SSRF protections ship by default, so agents pull from the right sources without putting your stack at risk.
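As a rough illustration of what URL validation against SSRF can look like, the sketch below uses only the Python standard library. It is an assumption about the general technique, not CrewAI's actual checks, and `is_safe_url` is a hypothetical helper. It also stops short of a full defense: a production check would resolve hostnames and test the resolved addresses as well.

```python
import ipaddress
from urllib.parse import urlparse


def is_safe_url(url: str) -> bool:
    """Reject URLs that could point a fetcher at local or internal services."""
    parsed = urlparse(url)
    if parsed.scheme not in {"http", "https"}:
        return False  # blocks file://, ftp://, and similar schemes
    host = parsed.hostname
    if not host or host == "localhost" or host.endswith(".localhost"):
        return False
    try:
        ip = ipaddress.ip_address(host)
    except ValueError:
        # A DNS name rather than an IP literal; accepted here without resolution.
        return True
    # Only globally routable addresses pass (no loopback, private, or link-local).
    return ip.is_global
```

For example, `is_safe_url("http://169.254.169.254/latest/meta-data/")` is rejected because the address is link-local, while an ordinary public HTTPS URL passes.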

Collaboration

Agents that collaborate

CrewAI transforms a set of AI agents into a crew of AI agents that collaborate via context sharing and delegation to perform complex tasks. Native Agent-to-Agent (A2A) protocol support lets crews coordinate across processes, services, and clouds — with poll, stream, and push update mechanisms baked in.

Checkpointing

Capture runtime state at every step

Pause, resume, fork, and replay any crew or flow. CrewAI's checkpointing system captures runtime state at every step, with a tree-view TUI for browsing checkpoints, editable inputs and outputs, and lineage tracking across forks. Bring crashed runs back to life or branch a successful run into multiple variations — all without rewriting your code.
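The fork-and-lineage idea can be pictured with a small in-memory store. This is a sketch in plain Python under stated assumptions, not CrewAI's checkpointing API: `CheckpointStore` and its methods are hypothetical, and state is a simple dictionary.

```python
import copy
import itertools


class CheckpointStore:
    """Toy checkpoint store: each checkpoint records a state snapshot and its parent."""

    def __init__(self):
        self._ids = itertools.count(1)
        self._data = {}  # checkpoint id -> (parent id or None, state snapshot)

    def save(self, state: dict, parent=None) -> int:
        checkpoint_id = next(self._ids)
        # Deep-copy so later mutations of the live state cannot alter the snapshot.
        self._data[checkpoint_id] = (parent, copy.deepcopy(state))
        return checkpoint_id

    def load(self, checkpoint_id: int) -> dict:
        return copy.deepcopy(self._data[checkpoint_id][1])

    def lineage(self, checkpoint_id: int) -> list:
        # Walk parent links back to the root, then report root-first.
        chain = []
        while checkpoint_id is not None:
            chain.append(checkpoint_id)
            checkpoint_id = self._data[checkpoint_id][0]
        return chain[::-1]


store = CheckpointStore()
state = {"step": 0, "notes": []}
c1 = store.save(state)

state["step"] = 1
state["notes"].append("drafted")
c2 = store.save(state, parent=c1)

# Fork: resume from the first checkpoint and take a different path.
fork = store.load(c1)
fork["notes"].append("alternative draft")
c3 = store.save(fork, parent=c1)
```

Here `c2` and `c3` share `c1` as their parent, so both branches can be replayed or compared without touching the original run.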

Async

Native async/await support

Run crews, flows, tasks, tools, knowledge, memory, and LLM calls asynchronously with native async/await support. Streaming results flow back in real time from flows and crews, and async context managers make resource cleanup automatic. Write the orchestration you want — CrewAI handles the concurrency.
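For readers new to the underlying mechanics, the sketch below shows concurrent tasks and automatic cleanup using only Python's standard `asyncio` and `contextlib`. The coroutine and session names are hypothetical stand-ins, not CrewAI calls; the sleep takes the place of an LLM or tool request.

```python
import asyncio
from contextlib import asynccontextmanager

events = []  # records open/close order so the cleanup is visible


@asynccontextmanager
async def session(name: str):
    events.append(f"open {name}")
    try:
        yield name
    finally:
        # Runs even if a task raises, so the resource is always released.
        events.append(f"close {name}")


async def run_task(name: str, delay: float) -> str:
    await asyncio.sleep(delay)  # stand-in for an LLM or tool call
    return f"{name}: done"


async def main() -> list:
    async with session("crew"):
        # Both tasks run concurrently; gather returns results in argument order.
        return await asyncio.gather(
            run_task("research", 0.02),
            run_task("summarize", 0.01),
        )


results = asyncio.run(main())
```

Although `summarize` finishes first, `asyncio.gather` returns results in the order the tasks were passed in, which keeps downstream code predictable.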

Agent-to-Agent (A2A)

Connect crews with the A2A protocol

Crews speak the Agent-to-Agent (A2A) protocol natively, so they can coordinate with agents running in other processes, services, and clouds. Updates arrive by poll, stream, or push.

Agent roles

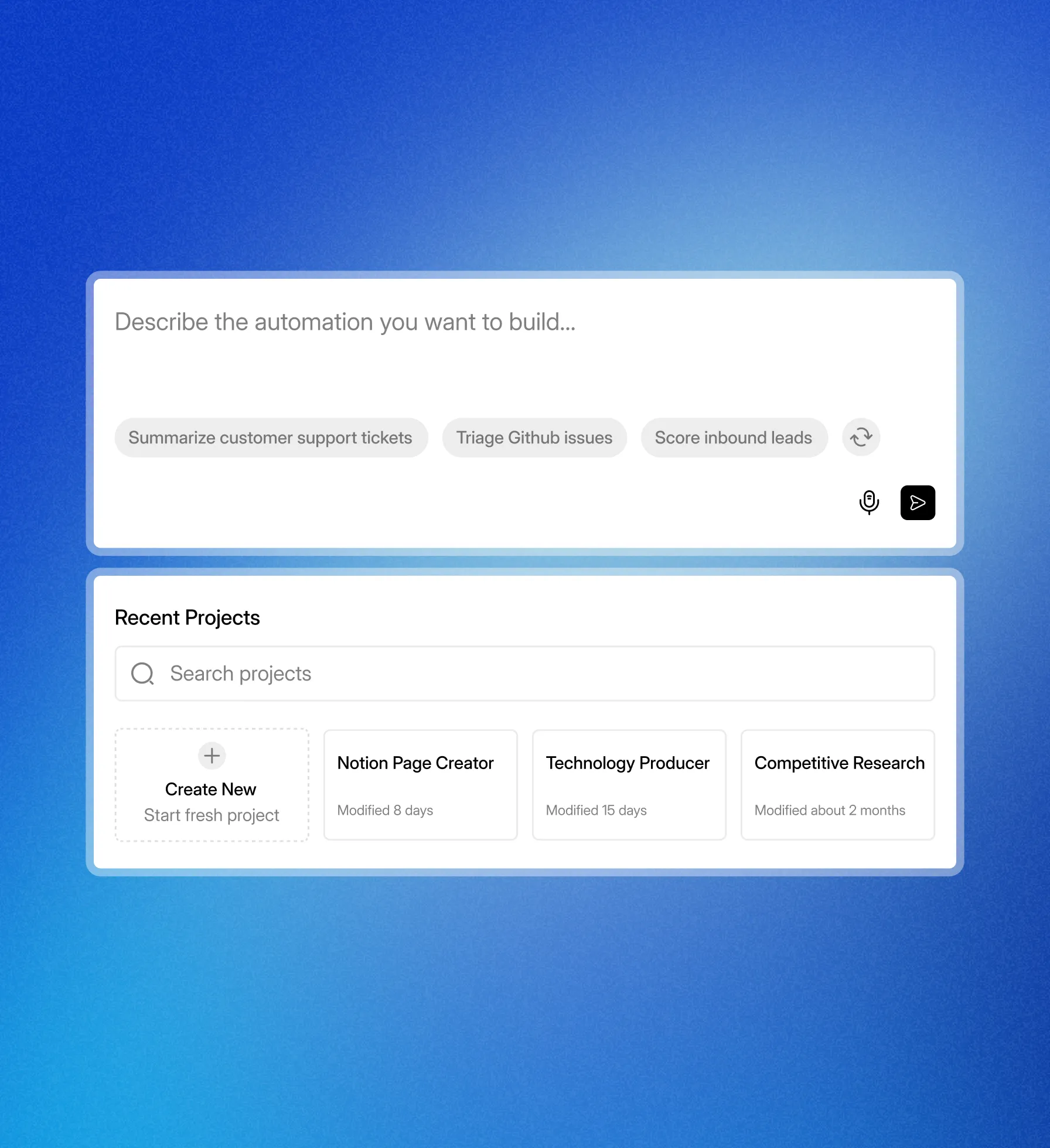

Describe an agent's role

Simply describe an AI agent's role, goal, and backstory to get started. Optionally, you can specify its LLM, enable reasoning and memory, attach tools and skills, and configure checkpointing.

Define in YAML, code, or both

Set the LLM and enable reasoning, memory, or skills

Specify tools, knowledge sources, and sandbox environments
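One way to picture the "YAML, code, or both" option is a base definition loaded from configuration and then tuned in code. The sketch below is plain Python and does not use CrewAI's own classes: `AgentSpec` is a hypothetical dataclass, the dictionary stands in for a parsed YAML entry, and the model and tool names are placeholders.

```python
from dataclasses import dataclass, field, replace
from typing import List, Optional


@dataclass(frozen=True)
class AgentSpec:
    # Required description of the agent.
    role: str
    goal: str
    backstory: str
    # Optional settings, left at defaults unless specified.
    llm: Optional[str] = None
    memory: bool = False
    tools: List[str] = field(default_factory=list)


# Stand-in for one agent entry parsed from a YAML file.
yaml_entry = {
    "role": "Researcher",
    "goal": "Find recent sources",
    "backstory": "A careful analyst.",
}

base = AgentSpec(**yaml_entry)

# Code-side overrides layered on top of the configured definition.
tuned = replace(base, llm="example-model", memory=True, tools=["web_search"])
```

Keeping the base spec immutable and deriving `tuned` with `replace` means the configured definition stays intact while code adds the optional pieces.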